Classic Object Detection: The YOLO Series (Part 1) Implementing the YOLOv1 Network (1): Overall Architecture

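Reimplementing the original YOLOv1 as-is would not teach us much. Instead, following the book 《YOLO目标检测》 (*YOLO Object Detection*, ISBN: 9787115627094), we rebuild a more modern YOLOv1 detector that keeps most of YOLOv1's core ideas, in order to understand YOLOv1 more deeply.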

Source code for the book: GitHub - yjh0410/RT-ODLab: YOLO Tutorial

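Compared with the original YOLOv1 network, the main changes are:

- Replace the backbone with ResNet-18.

- Add a neck of the kind used in later YOLO versions, namely the SPPF module.

- Remove the fully connected layers and replace them with a detection head plus prediction layers, using the decoupled head that is common in general-purpose detectors such as RetinaNet, FCOS, and YOLOX.

- Modify the loss function: YOLOv1's original MSE loss is replaced by BCE loss for the classification branch and GIoU loss for the regression branch.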
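To make the two new loss terms concrete, here is a minimal scalar sketch of BCE and of the GIoU loss (1 - GIoU) for two boxes in (x1, y1, x2, y2) format. It is plain Python for illustration only; the function names are hypothetical and this is not the book's vectorized PyTorch implementation.

```python
import math


def bce(p, y, eps=1e-7):
    """Binary cross-entropy for one predicted probability p and a 0/1 target y."""
    p = min(max(p, eps), 1.0 - eps)  # clamp to avoid log(0)
    return -(y * math.log(p) + (1 - y) * math.log(1 - p))


def giou(box_a, box_b):
    """Generalized IoU of two boxes given as (x1, y1, x2, y2)."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    area_a = (ax2 - ax1) * (ay2 - ay1)
    area_b = (bx2 - bx1) * (by2 - by1)
    # intersection rectangle (zero area if the boxes do not overlap)
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = area_a + area_b - inter
    iou = inter / union
    # area of the smallest box enclosing both boxes
    enclose = (max(ax2, bx2) - min(ax1, bx1)) * (max(ay2, by2) - min(ay1, by1))
    # GIoU = IoU - |C \ (A U B)| / |C|, which lies in [-1, 1]
    return iou - (enclose - union) / enclose


def giou_loss(pred_box, target_box):
    return 1.0 - giou(pred_box, target_box)


print(giou_loss((0, 0, 2, 2), (0, 0, 2, 2)))  # identical boxes
print(giou_loss((0, 0, 1, 1), (2, 0, 3, 1)))  # disjoint boxes: IoU is 0, yet the loss still depends on the gap
```

Unlike a plain IoU loss, which is flat (always 1) for any pair of non-overlapping boxes, the enclosing-box term keeps the GIoU loss informative when the predicted and target boxes do not overlap.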

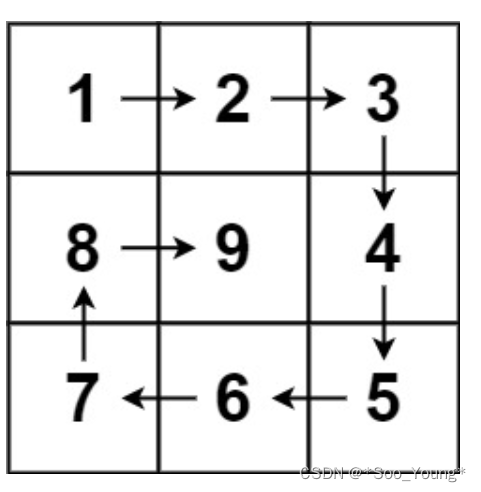

我们按照现在目标检测的整体架构,来搭建自己的YOLOV1网络,概览图如下:

1. Replacing the backbone

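- We use ResNet-18 as the backbone, replacing the GoogLeNet-style backbone of the original YOLOv1. With this change the overall downsampling factor drops from 64× to 32×. We also change the input size from the original 448×448 to 416×416.

- Unlike in a classification network, we remove the average pooling layer and the classification layer. An image passed through the backbone yields a 13×13×512 feature map (416 / 32 = 13). Compared with the original 7×7 grid, this output grid is finer.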
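The 13×13 grid follows from the standard output-size formula floor((n + 2p - k) / s) + 1 applied to each strided layer of the ResNet code: the 7×7 stride-2 stem convolution, the 3×3 stride-2 max pooling, and the stride-2 first block of layer2 through layer4. A small pure-Python sketch (the helper names are hypothetical):

```python
def conv_out(size, kernel, stride, padding):
    """Output size of a conv / pooling layer: floor((n + 2p - k) / s) + 1."""
    return (size + 2 * padding - kernel) // stride + 1


def resnet18_feature_sizes(img_size):
    """Spatial size after each stage of the ResNet-18 backbone (square inputs)."""
    sizes = {}
    s = conv_out(img_size, kernel=7, stride=2, padding=3)  # conv1, stride 2
    sizes['c1'] = s
    s = conv_out(s, kernel=3, stride=2, padding=1)         # maxpool, stride 2
    sizes['c2'] = s                                        # layer1 keeps the size (stride 1)
    for name in ('c3', 'c4', 'c5'):                        # layer2-4: first 3x3 conv has stride 2
        s = conv_out(s, kernel=3, stride=2, padding=1)
        sizes[name] = s
    return sizes


print(resnet18_feature_sizes(416))
print(resnet18_feature_sizes(448))
```

For a 416×416 input this gives 208, 104, 52, 26 and 13, matching the H/2 to H/32 comments in `ResNet.forward`. A 448×448 input would give a 14×14 map with this backbone, whereas the original stride-64 YOLOv1 produces 7×7.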

```python
# RT-ODLab/models/detectors/yolov1/yolov1_backbone.py
import torch
import torch.nn as nn
import torch.utils.model_zoo as model_zoo

# `from ... import *` only imports the names listed in __all__; all other members are excluded
__all__ = ['ResNet', 'resnet18', 'resnet34', 'resnet50', 'resnet101', 'resnet152']

model_urls = {
    'resnet18': 'https://download.pytorch.org/models/resnet18-5c106cde.pth',
    'resnet34': 'https://download.pytorch.org/models/resnet34-333f7ec4.pth',
    'resnet50': 'https://download.pytorch.org/models/resnet50-19c8e357.pth',
    'resnet101': 'https://download.pytorch.org/models/resnet101-5d3b4d8f.pth',
    'resnet152': 'https://download.pytorch.org/models/resnet152-b121ed2d.pth',
}


# --------------------- Basic Module -----------------------
def conv3x3(in_planes, out_planes, stride=1):
    """3x3 convolution with padding"""
    return nn.Conv2d(in_planes, out_planes, kernel_size=3, stride=stride,
                     padding=1, bias=False)


def conv1x1(in_planes, out_planes, stride=1):
    """1x1 convolution"""
    return nn.Conv2d(in_planes, out_planes, kernel_size=1, stride=stride, bias=False)


class BasicBlock(nn.Module):
    expansion = 1

    def __init__(self, inplanes, planes, stride=1, downsample=None):
        super(BasicBlock, self).__init__()
        self.conv1 = conv3x3(inplanes, planes, stride)
        self.bn1 = nn.BatchNorm2d(planes)
        self.relu = nn.ReLU(inplace=True)
        self.conv2 = conv3x3(planes, planes)
        self.bn2 = nn.BatchNorm2d(planes)
        self.downsample = downsample
        self.stride = stride

    def forward(self, x):
        identity = x

        out = self.conv1(x)
        out = self.bn1(out)
        out = self.relu(out)

        out = self.conv2(out)
        out = self.bn2(out)

        if self.downsample is not None:
            identity = self.downsample(x)

        out += identity
        out = self.relu(out)

        return out


class Bottleneck(nn.Module):
    expansion = 4

    def __init__(self, inplanes, planes, stride=1, downsample=None):
        super(Bottleneck, self).__init__()
        self.conv1 = conv1x1(inplanes, planes)
        self.bn1 = nn.BatchNorm2d(planes)
        self.conv2 = conv3x3(planes, planes, stride)
        self.bn2 = nn.BatchNorm2d(planes)
        self.conv3 = conv1x1(planes, planes * self.expansion)
        self.bn3 = nn.BatchNorm2d(planes * self.expansion)
        self.relu = nn.ReLU(inplace=True)
        self.downsample = downsample
        self.stride = stride

    def forward(self, x):
        identity = x

        out = self.conv1(x)
        out = self.bn1(out)
        out = self.relu(out)

        out = self.conv2(out)
        out = self.bn2(out)
        out = self.relu(out)

        out = self.conv3(out)
        out = self.bn3(out)

        if self.downsample is not None:
            identity = self.downsample(x)

        out += identity
        out = self.relu(out)

        return out


# --------------------- ResNet -----------------------
class ResNet(nn.Module):

    def __init__(self, block, layers, zero_init_residual=False):
        super(ResNet, self).__init__()
        self.inplanes = 64
        self.conv1 = nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3,
                               bias=False)
        self.bn1 = nn.BatchNorm2d(64)
        self.relu = nn.ReLU(inplace=True)
        self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
        self.layer1 = self._make_layer(block, 64, layers[0])
        self.layer2 = self._make_layer(block, 128, layers[1], stride=2)
        self.layer3 = self._make_layer(block, 256, layers[2], stride=2)
        self.layer4 = self._make_layer(block, 512, layers[3], stride=2)

        for m in self.modules():
            if isinstance(m, nn.Conv2d):
                nn.init.kaiming_normal_(m.weight, mode='fan_out', nonlinearity='relu')
            elif isinstance(m, nn.BatchNorm2d):
                nn.init.constant_(m.weight, 1)
                nn.init.constant_(m.bias, 0)

        # Zero-initialize the last BN in each residual branch,
        # so that the residual branch starts with zeros, and each residual block behaves like an identity.
        # This improves the model by 0.2~0.3% according to https://arxiv.org/abs/1706.02677
        if zero_init_residual:
            for m in self.modules():
                if isinstance(m, Bottleneck):
                    nn.init.constant_(m.bn3.weight, 0)
                elif isinstance(m, BasicBlock):
                    nn.init.constant_(m.bn2.weight, 0)

    def _make_layer(self, block, planes, blocks, stride=1):
        downsample = None
        if stride != 1 or self.inplanes != planes * block.expansion:
            downsample = nn.Sequential(
                conv1x1(self.inplanes, planes * block.expansion, stride),
                nn.BatchNorm2d(planes * block.expansion),
            )

        layers = []
        layers.append(block(self.inplanes, planes, stride, downsample))
        self.inplanes = planes * block.expansion
        for _ in range(1, blocks):
            layers.append(block(self.inplanes, planes))

        return nn.Sequential(*layers)

    def forward(self, x):
        """
            Input:
                x: (Tensor) -> [B, C, H, W]
            Output:
                c5: (Tensor) -> [B, C, H/32, W/32]
        """
        c1 = self.conv1(x)     # [B, C, H/2, W/2]
        c1 = self.bn1(c1)      # [B, C, H/2, W/2]
        c1 = self.relu(c1)     # [B, C, H/2, W/2]
        c2 = self.maxpool(c1)  # [B, C, H/4, W/4]

        c2 = self.layer1(c2)   # [B, C, H/4, W/4]
        c3 = self.layer2(c2)   # [B, C, H/8, W/8]
        c4 = self.layer3(c3)   # [B, C, H/16, W/16]
        c5 = self.layer4(c4)   # [B, C, H/32, W/32]

        return c5


# --------------------- Functions -----------------------
def resnet18(pretrained=False, **kwargs):
    """Constructs a ResNet-18 model.

    Args:
        pretrained (bool): If True, returns a model pre-trained on ImageNet
    """
    model = ResNet(BasicBlock, [2, 2, 2, 2], **kwargs)
    if pretrained:
        # strict = False as we don't need fc layer params.
        model.load_state_dict(model_zoo.load_url(model_urls['resnet18']), strict=False)
    return model


def resnet34(pretrained=False, **kwargs):
    """Constructs a ResNet-34 model.

    Args:
        pretrained (bool): If True, returns a model pre-trained on ImageNet
    """
    model = ResNet(BasicBlock, [3, 4, 6, 3], **kwargs)
    if pretrained:
        model.load_state_dict(model_zoo.load_url(model_urls['resnet34']), strict=False)
    return model


def resnet50(pretrained=False, **kwargs):
    """Constructs a ResNet-50 model.

    Args:
        pretrained (bool): If True, returns a model pre-trained on ImageNet
    """
    model = ResNet(Bottleneck, [3, 4, 6, 3], **kwargs)
    if pretrained:
        model.load_state_dict(model_zoo.load_url(model_urls['resnet50']), strict=False)
    return model


def resnet101(pretrained=False, **kwargs):
    """Constructs a ResNet-101 model.

    Args:
        pretrained (bool): If True, returns a model pre-trained on ImageNet
    """
    model = ResNet(Bottleneck, [3, 4, 23, 3], **kwargs)
    if pretrained:
        model.load_state_dict(model_zoo.load_url(model_urls['resnet101']), strict=False)
    return model


def resnet152(pretrained=False, **kwargs):
    """Constructs a ResNet-152 model.

    Args:
        pretrained (bool): If True, returns a model pre-trained on ImageNet
    """
    model = ResNet(Bottleneck, [3, 8, 36, 3], **kwargs)
    if pretrained:
        # strict = False here too: this ResNet has no fc layer, so the checkpoint's fc params are unused
        model.load_state_dict(model_zoo.load_url(model_urls['resnet152']), strict=False)
    return model


## build resnet
def build_backbone(model_name='resnet18', pretrained=False):
    if model_name == 'resnet18':
        model = resnet18(pretrained)
        feat_dim = 512
    elif model_name == 'resnet34':
        model = resnet34(pretrained)
        feat_dim = 512
    elif model_name == 'resnet50':
        model = resnet50(pretrained)   # fixed: previously built resnet34 here
        feat_dim = 2048
    elif model_name == 'resnet101':
        model = resnet101(pretrained)  # fixed: previously built resnet34 here
        feat_dim = 2048
    else:
        raise NotImplementedError('Unknown backbone: {}'.format(model_name))

    return model, feat_dim


if __name__ == '__main__':
    model, feat_dim = build_backbone(model_name='resnet18', pretrained=True)
    print(model)

    x = torch.randn(1, 3, 416, 416)
    print(model(x).shape)  # torch.Size([1, 512, 13, 13])
```

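2. Adding a neck

- The original YOLOv1 has no concept of a neck. As object detection has developed and its frameworks have matured, a general-purpose detector has come to be divided into three parts: backbone, neck, and head. Current YOLO models follow this design as well.

- We therefore follow current mainstream practice and add a neck to our YOLOv1. Here we choose the SPPF module used in YOLOv5.

The main SPPF code is as follows: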

```python
# RT-ODLab/models/detectors/yolov1/yolov1_neck.py
import torch
import torch.nn as nn

from .yolov1_basic import Conv


# Spatial Pyramid Pooling - Fast (SPPF) layer for YOLOv5 by Glenn Jocher
class SPPF(nn.Module):
    """
        This code referenced to https://github.com/ultralytics/yolov5
    """
    def __init__(self, in_dim, out_dim, expand_ratio=0.5, pooling_size=5, act_type='lrelu', norm_type='BN'):
        super().__init__()
        inter_dim = int(in_dim * expand_ratio)
        self.out_dim = out_dim
        # 1x1 conv: reduce the channels from in_dim to inter_dim
        self.cv1 = Conv(in_dim, inter_dim, k=1, act_type=act_type, norm_type=norm_type)
        # 1x1 conv: fuse the 4 concatenated maps (inter_dim * 4 channels) into out_dim channels
        self.cv2 = Conv(inter_dim * 4, out_dim, k=1, act_type=act_type, norm_type=norm_type)
        # max pooling (5x5 by default), stride 1 with "same" padding, so H and W are unchanged
        self.m = nn.MaxPool2d(kernel_size=pooling_size, stride=1, padding=pooling_size // 2)

    def forward(self, x):
        # 1. Conv-BN-LeakyReLU
        x = self.cv1(x)
        # 2. first 5x5 max pooling
        y1 = self.m(x)
        # 3. second 5x5 max pooling, applied in series to y1
        y2 = self.m(y1)
        # 4. third 5x5 max pooling, applied in series to y2
        y3 = self.m(y2)
        # 5. concatenate the four maps above along the channel dimension
        concat_y = torch.cat((x, y1, y2, y3), 1)
        # 6. another Conv-BN-LeakyReLU
        return self.cv2(concat_y)


def build_neck(cfg, in_dim, out_dim):
    model = cfg['neck']
    print('==============================')
    print('Neck: {}'.format(model))
    # build neck
    if model == 'sppf':
        neck = SPPF(
            in_dim=in_dim,
            out_dim=out_dim,
            expand_ratio=cfg['expand_ratio'],
            pooling_size=cfg['pooling_size'],
            act_type=cfg['neck_act'],
            norm_type=cfg['neck_norm']
        )
    else:
        raise NotImplementedError('Unknown neck: {}'.format(model))

    return neck
```
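Why three 5×5 pools in series? With stride 1 and negative-infinity padding (which is how `nn.MaxPool2d` pads), max-pooling an already max-pooled map is the same as one larger window: two serial 5×5 pools equal one 9×9 pool, and three equal one 13×13 pool. These are the kernel sizes (5, 9, 13) of the parallel pools in YOLOv5's SPP, so SPPF gets the same outputs with less computation. The NumPy sketch below is a naive single-channel pooling written only for this check (the helper name is hypothetical); it is not PyTorch code.

```python
import numpy as np


def max_pool_same(x, k):
    """Stride-1 k x k max pooling (odd k) with padding k // 2, padding with -inf like nn.MaxPool2d."""
    p = k // 2
    h, w = x.shape
    padded = np.full((h + 2 * p, w + 2 * p), -np.inf)
    padded[p:p + h, p:p + w] = x
    out = np.empty((h, w))
    for i in range(h):
        for j in range(w):
            out[i, j] = padded[i:i + k, j:j + k].max()
    return out


rng = np.random.default_rng(0)
x = rng.standard_normal((16, 16))

# serial pooling, as in SPPF.forward
y1 = max_pool_same(x, 5)
y2 = max_pool_same(y1, 5)
y3 = max_pool_same(y2, 5)

print(np.allclose(y2, max_pool_same(x, 9)), np.allclose(y3, max_pool_same(x, 13)))
```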

3、Detection Head网络

- 原版的YOLOv1采用了全连接层来完成最终的处理,即将此前卷积输出的二维 H×W 特征图拉平(flatten操作)成一维 HW 向量,然后接全连接层得到4096维的一维向量。

flatten操作会破坏特征的空间结构信息,因此,我们抛掉这里的flatten操作,改用当下主流的基于卷积的检测头。- 具体来说,我们使用普遍用在RetinaNet、FCOS、YOLOX等通用目标检测网络中的

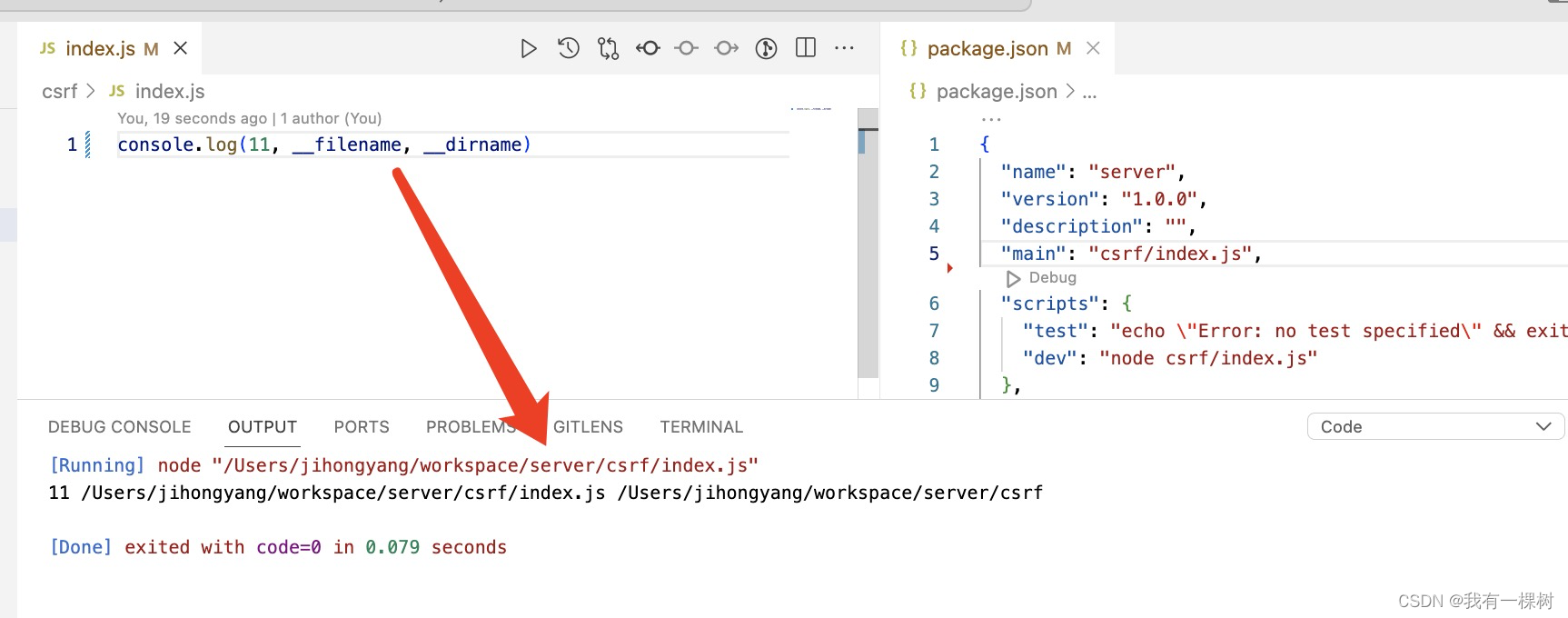

解耦检测头(Decoupled head),即使用两条并行的分支,分别用于提取类别特征和位置特征,两条分支都由卷积层构成。 - 下图展示了我们所采用的Decoupled head以及后面的预测层结构。
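A side effect of dropping flatten + fully connected, separate from the spatial-structure argument above, is the parameter count. The arithmetic below uses the layer sizes of the original YOLOv1 (a 7×7×1024 feature map fed to a 4096-unit FC layer) and, as an assumed comparison point, a single 3×3 convolution with 512 input and output channels like those in the decoupled head, counting conv weights only (bias omitted, assuming BN follows). The helper names are hypothetical.

```python
def fc_params(in_features, out_features):
    # weight matrix + bias vector of a fully connected layer
    return in_features * out_features + out_features


def conv_weights(c_in, c_out, k):
    # one k x k kernel per (input channel, output channel) pair; bias omitted
    return k * k * c_in * c_out


yolov1_fc = fc_params(7 * 7 * 1024, 4096)  # original YOLOv1: flattened 7x7x1024 -> 4096
fc_on_13 = fc_params(13 * 13 * 512, 4096)  # hypothetical FC on our 13x13x512 map
head_conv = conv_weights(512, 512, 3)      # one 3x3 conv in the head, for any H x W

print(f"{yolov1_fc:,}  {fc_on_13:,}  {head_conv:,}")
```

The FC layer alone holds about 205.5 million parameters, and its size is tied to H×W, so it fixes the input resolution. The convolution holds about 2.36 million weights regardless of the feature-map size.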

```python
# RT-ODLab/models/detectors/yolov1/yolov1_head.py
import torch
import torch.nn as nn

try:
    from .yolov1_basic import Conv
except ImportError:
    # fall back to an absolute import when this file is run as a script
    from yolov1_basic import Conv


class DecoupledHead(nn.Module):
    def __init__(self, cfg, in_dim, out_dim, num_classes=80):
        super().__init__()
        print('==============================')
        print('Head: Decoupled Head')
        self.in_dim = in_dim
        self.num_cls_head = cfg['num_cls_head']
        self.num_reg_head = cfg['num_reg_head']
        self.act_type = cfg['head_act']
        self.norm_type = cfg['head_norm']

        # cls head
        cls_feats = []
        self.cls_out_dim = max(out_dim, num_classes)
        for i in range(cfg['num_cls_head']):
            if i == 0:
                cls_feats.append(
                    Conv(in_dim, self.cls_out_dim, k=3, p=1, s=1,
                         act_type=self.act_type,
                         norm_type=self.norm_type,
                         depthwise=cfg['head_depthwise'])
                )
            else:
                cls_feats.append(
                    Conv(self.cls_out_dim, self.cls_out_dim, k=3, p=1, s=1,
                         act_type=self.act_type,
                         norm_type=self.norm_type,
                         depthwise=cfg['head_depthwise'])
                )

        # reg head
        reg_feats = []
        self.reg_out_dim = max(out_dim, 64)
        for i in range(cfg['num_reg_head']):
            if i == 0:
                reg_feats.append(
                    Conv(in_dim, self.reg_out_dim, k=3, p=1, s=1,
                         act_type=self.act_type,
                         norm_type=self.norm_type,
                         depthwise=cfg['head_depthwise'])
                )
            else:
                reg_feats.append(
                    Conv(self.reg_out_dim, self.reg_out_dim, k=3, p=1, s=1,
                         act_type=self.act_type,
                         norm_type=self.norm_type,
                         depthwise=cfg['head_depthwise'])
                )

        self.cls_feats = nn.Sequential(*cls_feats)
        self.reg_feats = nn.Sequential(*reg_feats)

    def forward(self, x):
        """
            in_feats: (Tensor) [B, C, H, W]
        """
        cls_feats = self.cls_feats(x)
        reg_feats = self.reg_feats(x)

        return cls_feats, reg_feats


# build detection head
def build_head(cfg, in_dim, out_dim, num_classes=80):
    head = DecoupledHead(cfg, in_dim, out_dim, num_classes)

    return head
```

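4. Prediction layer

The prediction layer at the end of the detection head also needs corresponding changes.

We require our YOLOv1 to predict only one bbox per grid cell, rather than two or more. Although the original YOLOv1 predicts two bboxes per cell, each cell ultimately outputs only one bbox at inference time, so in terms of results this is the same as predicting a single bbox per cell.

- Objectness prediction: after the output of the class branch of the decoupled head, we add a 1×1 convolution to predict objectness, and apply a Sigmoid at the end to output the objectness value.
- Classification prediction: following common practice in the YOLO series, we also use a Sigmoid to output the classification predictions, so each class confidence lies between 0 and 1. Classification and objectness prediction share the same branch of the decoupled head.
- Bbox regression prediction: the location features from the other branch are used to predict bounding-box offsets. That is, another 1×1 convolution processes the head's location features to produce the box-offset prediction for each grid cell.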
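In the model's `inference()` method these Sigmoid outputs are combined into per-class scores as `sqrt(sigmoid(obj) * sigmoid(cls))`. A small NumPy sketch with made-up logits for three grid cells and four classes shows the shapes involved and that per-class Sigmoid probabilities, unlike a softmax, need not sum to 1:

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


# made-up raw logits: 3 grid cells, 4 classes
obj_logits = np.array([[2.0], [0.0], [-3.0]])           # [H*W, 1]
cls_logits = np.array([[1.0, -1.0, 0.0, 3.0],
                       [0.5, 0.5, 0.5, 0.5],
                       [4.0, -2.0, 0.0, 0.0]])           # [H*W, num_classes]

obj = sigmoid(obj_logits)    # objectness in (0, 1)
cls = sigmoid(cls_logits)    # independent per-class confidences in (0, 1)
scores = np.sqrt(obj * cls)  # [H*W, 1] broadcasts over classes -> [H*W, num_classes]

print(cls.sum(axis=1))       # row sums are not 1, unlike a softmax
print(scores.round(3))
```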

```python
## Prediction layers (excerpt from YOLOv1.__init__)
self.obj_pred = nn.Conv2d(head_dim, 1, kernel_size=1)
self.cls_pred = nn.Conv2d(head_dim, num_classes, kernel_size=1)
self.reg_pred = nn.Conv2d(head_dim, 4, kernel_size=1)
```
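A 1×1 convolution applies the same linear map to the feature vector of every grid cell independently, so each cell gets its own objectness, class, and box prediction. The NumPy sketch below mimics the three prediction layers with `einsum` (random weights, zero bias, and a small `head_dim` of 8 instead of 512, all chosen for illustration) and also reproduces the `[B, C, H, W] -> [B, H*W, C]` reshaping used in the model.

```python
import numpy as np


def conv1x1(x, weight, bias):
    """1x1 convolution: x [B, C_in, H, W], weight [C_out, C_in], bias [C_out] -> [B, C_out, H, W]."""
    return np.einsum('oc,bchw->bohw', weight, x) + bias[None, :, None, None]


rng = np.random.default_rng(0)
B, head_dim, H, W, num_classes = 2, 8, 13, 13, 20

cls_feat = rng.standard_normal((B, head_dim, H, W))  # class-branch features
reg_feat = rng.standard_normal((B, head_dim, H, W))  # regression-branch features
w_obj, w_cls, w_reg = (rng.standard_normal((c, head_dim)) for c in (1, num_classes, 4))

obj_pred = conv1x1(cls_feat, w_obj, np.zeros(1))            # [B, 1, H, W]
cls_pred = conv1x1(cls_feat, w_cls, np.zeros(num_classes))  # [B, num_classes, H, W]
reg_pred = conv1x1(reg_feat, w_reg, np.zeros(4))            # [B, 4, H, W]

# same reshaping as the model: [B, C, H, W] -> [B, H, W, C] -> [B, H*W, C]
cls_flat = cls_pred.transpose(0, 2, 3, 1).reshape(B, H * W, num_classes)
# cell (row i, column j) ends up at index i * W + j
print(np.allclose(cls_flat[1, 5 * W + 7], w_cls @ cls_feat[1, :, 5, 7]))
```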

5. Detailed network of the improved YOLO

```python
# RT-ODLab/models/detectors/yolov1/yolov1.py
import torch
import torch.nn as nn
import numpy as np

from utils.misc import multiclass_nms

from .yolov1_backbone import build_backbone
from .yolov1_neck import build_neck
from .yolov1_head import build_head


# YOLOv1
class YOLOv1(nn.Module):
    def __init__(self,
                 cfg,
                 device,
                 img_size=None,
                 num_classes=20,
                 conf_thresh=0.01,
                 nms_thresh=0.5,
                 trainable=False,
                 deploy=False,
                 nms_class_agnostic: bool = False):
        super(YOLOv1, self).__init__()
        # ------------------------- Basic parameters ---------------------------
        self.cfg = cfg                    # model config
        self.img_size = img_size          # input image size
        self.device = device              # cuda or cpu
        self.num_classes = num_classes    # number of classes
        self.trainable = trainable        # training flag
        self.conf_thresh = conf_thresh    # score threshold
        self.nms_thresh = nms_thresh      # NMS threshold
        self.stride = 32                  # largest stride of the network
        self.deploy = deploy
        self.nms_class_agnostic = nms_class_agnostic

        # ----------------------- Network structure -------------------------
        ## Backbone (ImageNet weights are loaded only when training with pretrained=True)
        self.backbone, feat_dim = build_backbone(
            cfg['backbone'],
            trainable and cfg['pretrained']
        )

        ## Neck
        self.neck = build_neck(cfg, feat_dim, out_dim=512)
        head_dim = self.neck.out_dim

        ## Detection head
        self.head = build_head(cfg, head_dim, head_dim, num_classes)

        ## Prediction layers
        self.obj_pred = nn.Conv2d(head_dim, 1, kernel_size=1)
        self.cls_pred = nn.Conv2d(head_dim, num_classes, kernel_size=1)
        self.reg_pred = nn.Conv2d(head_dim, 4, kernel_size=1)

    def create_grid(self, fmp_size):
        """
            Generate the grid matrix G, in which each element is a pixel coordinate on the feature map.
        """
        pass

    def decode_boxes(self, pred, fmp_size):
        """
            Convert tx, ty, tw, th into the common x1y1x2y2 format.
            pred: regression predictions
            fmp_size: height and width of the feature map
        """
        pass

    def postprocess(self, bboxes, scores):
        """
            Post-processing: score thresholding and non-maximum suppression.
            1. Filter out predicted boxes with low scores (score below the given threshold);
            2. Filter out redundant detections of the same object.
            Input:
                bboxes: [HxW, 4]
                scores: [HxW, num_classes]
            Output:
                bboxes: [N, 4]
                score:  [N,]
                labels: [N,]
        """
        pass

    @torch.no_grad()
    def inference(self, x):
        # Forward pass at test time
        # Backbone
        feat = self.backbone(x)

        # Neck
        feat = self.neck(feat)

        # Detection head
        cls_feat, reg_feat = self.head(feat)

        # Prediction layers
        obj_pred = self.obj_pred(cls_feat)
        cls_pred = self.cls_pred(cls_feat)
        reg_pred = self.reg_pred(reg_feat)
        fmp_size = obj_pred.shape[-2:]

        # Reshape the predictions to simplify later processing
        # [B, C, H, W] -> [B, H, W, C] -> [B, H*W, C]
        obj_pred = obj_pred.permute(0, 2, 3, 1).contiguous().flatten(1, 2)
        cls_pred = cls_pred.permute(0, 2, 3, 1).contiguous().flatten(1, 2)
        reg_pred = reg_pred.permute(0, 2, 3, 1).contiguous().flatten(1, 2)

        # At test time the author assumes a batch size of 1,
        # so the batch dimension is not needed and is removed with [0].
        obj_pred = obj_pred[0]       # [H*W, 1]
        cls_pred = cls_pred[0]       # [H*W, NC]
        reg_pred = reg_pred[0]       # [H*W, 4]

        # Score of each bounding box
        scores = torch.sqrt(obj_pred.sigmoid() * cls_pred.sigmoid())

        # Decode the bounding boxes and normalize them: [H*W, 4]
        bboxes = self.decode_boxes(reg_pred, fmp_size)

        if self.deploy:
            # [n_anchors_all, 4 + C]
            outputs = torch.cat([bboxes, scores], dim=-1)
            return outputs
        else:
            # Move the predictions to the CPU for post-processing
            scores = scores.cpu().numpy()
            bboxes = bboxes.cpu().numpy()

            # Post-processing
            bboxes, scores, labels = self.postprocess(bboxes, scores)

            return bboxes, scores, labels

    def forward(self, x):
        # Forward pass during training (falls back to inference() when not trainable)
        if not self.trainable:
            return self.inference(x)
        else:
            # Backbone
            feat = self.backbone(x)

            # Neck
            feat = self.neck(feat)

            # Detection head
            cls_feat, reg_feat = self.head(feat)

            # Prediction layers
            obj_pred = self.obj_pred(cls_feat)
            cls_pred = self.cls_pred(cls_feat)
            reg_pred = self.reg_pred(reg_feat)
            fmp_size = obj_pred.shape[-2:]

            # Reshape the predictions to simplify later processing
            # [B, C, H, W] -> [B, H, W, C] -> [B, H*W, C]
            obj_pred = obj_pred.permute(0, 2, 3, 1).contiguous().flatten(1, 2)
            cls_pred = cls_pred.permute(0, 2, 3, 1).contiguous().flatten(1, 2)
            reg_pred = reg_pred.permute(0, 2, 3, 1).contiguous().flatten(1, 2)

            # decode bbox
            box_pred = self.decode_boxes(reg_pred, fmp_size)

            # Network outputs
            outputs = {
                "pred_obj": obj_pred,   # (Tensor) [B, M, 1]
                "pred_cls": cls_pred,   # (Tensor) [B, M, C]
                "pred_box": box_pred,   # (Tensor) [B, M, 4]
                "stride": self.stride,  # (Int)
                "fmp_size": fmp_size    # (List) [fmp_h, fmp_w]
            }
            return outputs
```
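The `create_grid` and `decode_boxes` methods above are left as stubs. As a hedged preview, here is a NumPy sketch that assumes one common YOLO-style parameterization: the box center is (grid-cell index + sigmoid(tx, ty)) × stride and the box size is exp(tw, th) × stride. This parameterization is an assumption for illustration and may differ from the one the book actually uses; the functions are standalone, not the class methods.

```python
import numpy as np


def create_grid(fmp_size):
    """Return a [H*W, 2] array of (x, y) cell coordinates, in the same row-major order as flatten(1, 2)."""
    fmp_h, fmp_w = fmp_size
    ys, xs = np.meshgrid(np.arange(fmp_h), np.arange(fmp_w), indexing='ij')
    return np.stack([xs, ys], axis=-1).reshape(-1, 2).astype(np.float32)


def decode_boxes(pred, fmp_size, stride=32):
    """pred: [H*W, 4] raw (tx, ty, tw, th) -> [H*W, 4] boxes as x1y1x2y2 in input-image pixels (assumed scheme)."""
    grid = create_grid(fmp_size)
    ctr = (grid + 1.0 / (1.0 + np.exp(-pred[:, :2]))) * stride  # center offset stays inside its cell
    wh = np.exp(pred[:, 2:]) * stride                            # exp keeps width and height positive
    return np.concatenate([ctr - 0.5 * wh, ctr + 0.5 * wh], axis=-1)


boxes = decode_boxes(np.zeros((13 * 13, 4)), (13, 13))
print(boxes[:2])
```

With all-zero predictions every box is centered in its cell and is one stride (32 pixels) wide, which is a convenient sanity check for the grid ordering.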

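At this point we have built the YOLOv1 network and implemented its forward pass. However, several important questions remain open in the inference code:

- How do we decode the box coordinates box_pred from the box offsets reg_pred?

- How do we implement post-processing?

- How do we compute the loss during training?

Beyond these, there are also dataset loading, data augmentation, and how to select positive samples for training.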
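For the post-processing question, here is a minimal NumPy sketch of the two steps listed in the `postprocess` docstring: score thresholding, then non-maximum suppression. The model file imports `multiclass_nms` from `utils.misc`, whose signature is not shown in this post, so the sketch writes its own greedy per-class NMS instead. The function names and the per-class (class-aware) choice, which mirrors the default `nms_class_agnostic=False`, are assumptions for illustration.

```python
import numpy as np


def nms(bboxes, scores, nms_thresh):
    """Greedy NMS. bboxes: [N, 4] x1y1x2y2, scores: [N]. Returns the indices of kept boxes."""
    x1, y1, x2, y2 = bboxes.T
    areas = (x2 - x1) * (y2 - y1)
    order = scores.argsort()[::-1]  # highest score first
    keep = []
    while order.size > 0:
        i = order[0]
        keep.append(i)
        xx1 = np.maximum(x1[i], x1[order[1:]])
        yy1 = np.maximum(y1[i], y1[order[1:]])
        xx2 = np.minimum(x2[i], x2[order[1:]])
        yy2 = np.minimum(y2[i], y2[order[1:]])
        inter = np.maximum(0.0, xx2 - xx1) * np.maximum(0.0, yy2 - yy1)
        iou = inter / (areas[i] + areas[order[1:]] - inter + 1e-14)
        order = order[1:][iou <= nms_thresh]  # drop boxes overlapping the kept one too much
    return np.array(keep, dtype=np.int64)


def postprocess(bboxes, scores, conf_thresh=0.01, nms_thresh=0.5):
    """bboxes: [H*W, 4], scores: [H*W, num_classes] -> (bboxes [N, 4], scores [N], labels [N])."""
    labels = scores.argmax(axis=1)                   # one box per cell: keep its best class
    scores = scores[np.arange(len(labels)), labels]
    keep = scores >= conf_thresh                     # 1. drop low-score boxes
    bboxes, scores, labels = bboxes[keep], scores[keep], labels[keep]
    final = np.zeros(len(bboxes), dtype=bool)        # 2. NMS within each class
    for c in np.unique(labels):
        idx = np.where(labels == c)[0]
        final[idx[nms(bboxes[idx], scores[idx], nms_thresh)]] = True
    return bboxes[final], scores[final], labels[final]
```

For example, two heavily overlapping boxes of the same class (IoU 0.81 with `nms_thresh=0.5`) collapse into the higher-scoring one, while a box of another class or a non-overlapping box survives.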